By David Pring-Mill

In his book “To Be a Machine,” author Mark O’Connell observed that a growing number of people view themselves in “mechanistic” or “instrumentalist” terms. They find fault in their own designs, or at least in their own functioning. They believe they might, one day, be redeemed of their deficient nature, a mythological and religious notion that long predates modern innovation. Cloaked in the language of this technologically inclined group, it is a view of humans as devices, with the duty and destiny to be “upgraded.”

But if we are devices, then we, like popular smartphones, seem to have planned obsolescence. And thus, many “transhumanists” believe they can solve the “problem” of mortality by digitally uploading the “self” and propagating humanity, or some version of it, throughout the cosmos.

O’Connell responded critically: “It seemed to me that to speak of colonizing the universe – of putting the universe to work on our projects – was to impose upon the meaningless void the deeper meaninglessness of our human insistence on meaning. I could imagine no greater absurdity, that is, than the insistence that everything be made to mean something.”

This grappling over existential meaning is premised on the notion that emerging technologies will satisfy particular goals, standards, and dimensions. These expectations and distortions linger over research and development like a cloud. Technical jargon is frequently invoked as an integral part of this process and, therefore, philosophical meaninglessness and linguistic meaninglessness become somewhat linked.

As tech buzzwords are used and created, there are often layers of presumption when it comes to empirical evidence, the scope of use cases, the probability of outcomes, desirability among different stakeholders, and even ethical justifications. Innovators hope to change the world, or at least industries, in profound ways.

There are also powerful biases and vested interests in favor of the status quo, causing people to dismiss things that are already happening and observably so. It’s not surprising that people would seek to dismiss what is possible, given the contention over what is.

Clearer language could work to establish common ground.

As I argued in the Policy2050 whitepaper “Definitive and Meaningless: The Rise of Technical Jargon,” opaque terms are sometimes used deliberately, to create hype across marketing and sales campaigns. However, if there’s a disparity between the language of potential customers and vendors, marketing and sales might actually be negatively affected. More fundamentally, jargon might also cover up a lack of awareness and agreement over what is being built or changed.

This latter problem has no easy fix, and it isn’t always a problem, because innovation thrives in the unknown. Chaos is the playground for human imagination. Original, creative works are oftentimes a form of reordering. Although it’s sometimes contrary to institutional requirements, financing mechanisms, and professional incentives, there are times when the consensus or traditional understanding must be suspended in order for new ideas and things to develop.

Speaking about his own journey in life, Steve Jobs once famously remarked, “You can’t connect the dots looking forward; you can only connect them looking backwards.” The same might be said of humanity’s technological advancement and search for knowledge.

Jordan Peterson, a clinical psychologist who became a polarizing public figure after wading into gender politics, has remarked that entrepreneurs are typically lateral thinkers. Hearing one idea triggers a bunch of other ideas, and entrepreneurs are constantly motivated by that pursuit, but their downfall can sometimes be insufficient organizational and administrative ability.

“There’s this weird tension between doing one thing right, which is what you need to do if you’ve already decided what it is that you’re doing, and scanning the landscape for something new to do that would be worthwhile. Those aren’t the same enterprises,” said Peterson, in an interview with entrepreneurial coach Rob Moore.

As startups grow, the managerial and administrative types tend to dominate. The companies lose that asset of lateral thinking and eventually fail. It’s worth noting here that this lens of organizational psychology may oversimplify things by dimming the wide array of material, political, and economic factors that also contribute to success or failure.

Peterson again references organizational psychology to explain why innovative ideas aren’t always embraced. Innovators are proud of their new and revolutionary products and think that these qualities will translate into an effective sales pitch.

“It is, if you’re talking to someone who’s entrepreneurial and risk-taking and interested in revolutionary ideas,” said Peterson. “But if you’re talking to a middle manager in a company, the last thing that person wants to hear is: well, you could be a risk-taker and introduce this into your company! The person is thinking: I don’t want to put my job or reputation on the line for your product, even if it is revolutionary, in part because if it succeeds, I probably won’t be rewarded for its success.”

In the same interview, Peterson said, “Thinking actually is conflict; it’s the pitting of opposing viewpoints against one another. And it’s very stressful and produces a tremendous amount of tension. But the question is, in part, do you want to figure that out in abstraction, even though that is very stressful, or do you want to live that out in the world?”

We cannot immediately find meaning and order in our thinking if the type of thinking that is required is antithetical to these very attributes. To introduce an additional layer of complexity, what happens to meaning when the emerging technology that we have created out of this unknown aims to entangle itself with thinking itself? Here, I am referring to Neuralink and neurotechnology.

Slavoj Žižek, the knowingly provocative Slovenian philosopher, sees problems ahead.

At a Nuit Blanche event in Toronto in the fall of 2012, he said, “When people tell me that nothing can be changed, my response is that no it can, because things are already changing like crazy. And what we should say is just this: If we let things change the way they are changing automatically, we are approaching a kind of new, perverse, permissively authoritarian society, which will be authoritarian in a new way.”

During a lecture at the University of Winnipeg in 2019, Žižek referred to Elon Musk, the founder of Neuralink, as “not a theorist but a popularizer.” (Musk prefers to characterize himself as a tireless engineer.) Although the prospect of a highly advanced brain-machine interface has been dismissed as implausible, Žižek suggested “something is going on in this domain and I will limit myself to questioning the philosophical implications and consequences of this prospect.”

Žižek went on to argue that the singularity has an implicit metaphysical dimension and the threat is obvious. He said that Ray Kurzweil, a central figure among the “transhumanist” group, simplifies things incredibly.

“Somehow, he presupposes that we still will be the same individuals who are doing it; he doesn’t even locate the danger,” said Žižek.

He wondered if a rewired human could be remotely controlled without even realizing the loss of free will. Responding to the idea of coffee machines that read thoughts and automatically begin making the desired drinks, Žižek remarked, “You lose this distance, gap, between yourself and reality; a gap which is the very basis of our thinking individuality.”

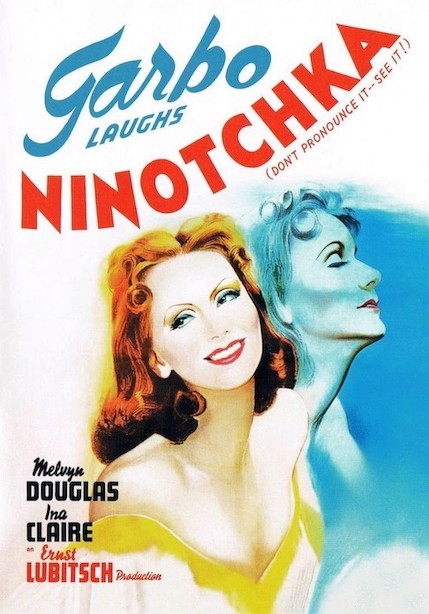

He recalled a joke from the 1939 film “Ninotchka.”

A guy enters a restaurant and asks the waiter, “Can I get a coffee but without cream, please?” The waiter responds: “Sorry, sir, we don’t have cream, we only have milk. So I cannot give you a coffee without cream, I can only give you a coffee without milk.”

Žižek often tells this joke in his lectures and debates. The audience laughs, then he explains, “This is what Hegel means by determinate negation.”

Although it’s the same coffee materially, a coffee without cream and a coffee without milk are not the same symbolically.

“Can the computer, into which your thoughts will be immersed, can it detect this difference?” Žižek wonders aloud.

I’m tempted to respond: can the average person?

And yet, this joke quite reliably elicits hearty laughter at all of Žižek’s events. Perhaps this negation of properties underlies our subjective determinations and perceptions, and the laughter represents an alleviation of that tension. Perhaps the joke probes at our human need to neurotically categorize and compare everything. Perhaps it is illuminating Peterson’s view that thinking is an act of conflict. Perhaps the laughter is actually connected to an awareness that we, as consumers, have become needlessly demanding and businesses are accommodating us in these absurdities, to maintain profits or avoid social conflict. After all, many startups these days are trying to gain a competitive advantage by obsessing over the customer experience and personalization, though it’s worth noting that this joke also did well in 1939.

Either way, Žižek’s point seems to be that thinking is complex and may elude technological capture in its deepest, purest form. Toward the end of his self-admittedly meandering lecture, he said that people currently experience a division between their inner life and the reality out there, or the reality of others, the opacity of others. If technologically enhanced or addled minds become normalized, Žižek says, “the most radical gap will be within me as a subject.”

When little is known about a proposed or still-developing technology, perhaps any view is fair game. Still, in my view, the imperative to communicate deliberately does not go away. As people who are, in many respects, professional thinkers, both Peterson and Žižek seem to have ideas about the intersection of ideas with the world. So often, ideas begin, or at least gain traction, as words. If these inadequate, symbolic representations cannot fully capture intended meanings, can technologies?